Azra Dhalla, Director of Health AI Implementation, outlines critical need for safe AI implementation in health care

Across Ontario hospitals, intensive care units (ICUs) have advanced technologies and skilled experts who can save lives. But sometimes, doctors and nurses on inpatient units can’t predict when a patient’s condition will worsen, meaning some patients may reach the ICU too late. To solve this, in 2020, St. Michael’s Hospital, a part of Unity Health Toronto (UHT), deployed CHARTWatch, an AI tool developed by UHT with support from the Vector Institute. CHARTWatch uses patient data to determine which inpatients are most at risk of escalating to the ICU or dying, helping health care teams make informed decisions faster. CHARTWatch has already demonstrated significant success. Even during the pandemic, it reduced ICU escalation and death by over 20 percent. An estimated 100 deaths are avoided each year thanks to this use of AI. Hospital staff also report that it has relieved their stress and workload, enabling them to focus on the patients who need care most.

As Vector’s Director of Health AI Implementation, I’ve seen positive outcomes like these when AI is integrated into our health care spaces. When properly implemented, AI can provide preventive and personalized health care solutions that improve patient outcomes and enable system-level efficiency. But as we deploy AI into our health systems, it is crucial that we do so in a safe and trustworthy way. By focusing on safe implementation, we can truly tap into AI’s transformative potential while mitigating risks for health care practitioners and patients.

The need for safe AI implementation in health

The expanding role of AI in health care raises a range of challenges and considerations for its safe deployment. Privacy, data security, and bias are understandable concerns among health care practitioners and patients. Canadian health care practitioners often face barriers to accessing health data for research, especially those who want to realize the potential benefits of AI. Privacy-enabled and secure access to patient data is imperative to successfully implementing AI in health. Additionally, safeguarding patient privacy while ensuring interoperability and safe data-sharing practices between health care providers and AI practitioners is crucial to unlocking the full potential of AI in health.

Incorporating checks and balances throughout the development and deployment process for AI models can mitigate potential risks and ensure that AI safely benefits our health care systems, practitioners, and patients. To this end, Vector Institute developed a Health AI Implementation Toolkit for anyone looking to deploy AI models into clinical environments. Drawing on expertise from the Vector community, the toolkit is a comprehensive resource that offers a step-by-step guide towards AI implementation, punctuated by real-world examples, easy-to-follow checklists, and safe and trustworthy AI considerations.

Addressing safe AI challenges

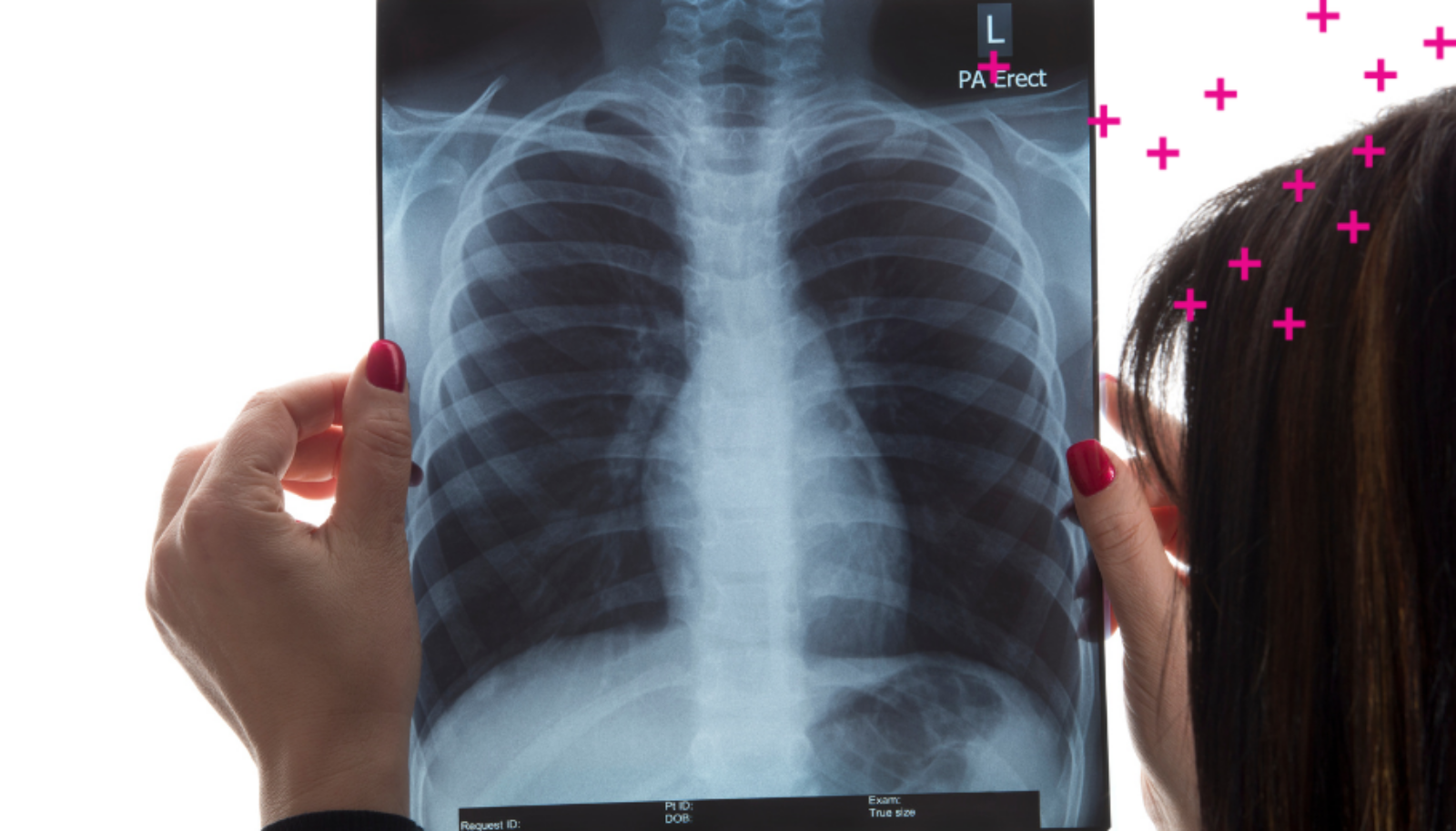

Another significant challenge in AI implementation is addressing bias in AI algorithms and datasets. Back in 2021, Laleh Seyyed-Kalantari and her colleagues discovered that an AI model they were working with was underdiagnosing traditionally underserved groups, including Black and low-income patients, and patients without health insurance. Further studies not only confirmed their findings, but showed that the model would actually work against these groups, diagnosing diseases in historically underserved groups at the same rate as in the overall population, even where actual rates may be higher or lower. “If you build an AI model that goes into practice and then it fails to provide equality for the entire population, people will lose their trust in the system,” says Seyyed-Kalantari, Assistant Professor at York University and previous Vector postdoctoral fellow. Seyyed-Kalantari’s research highlights the disparities that can arise when AI models are deployed incorrectly, especially for traditionally underserved populations, and the negative impact on accurate diagnoses and access to health care resources. It’s critical that bias in data is considered from the start and that this attention continues after a model is deployed.
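To make the idea of underdiagnosis concrete, here is a minimal sketch of how one might compare the rate of missed diagnoses across patient groups. It is not the method used in the research described above; the function name, the group labels, and the toy records are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict

def underdiagnosis_rates(records):
    """For each group, return the share of truly ill patients the model missed.

    `records` is an iterable of (group, has_disease, predicted_disease) tuples.
    This is the false negative rate computed separately per group.
    """
    ill = defaultdict(int)     # patients who actually have the disease
    missed = defaultdict(int)  # of those, patients the model labelled healthy
    for group, has_disease, predicted_disease in records:
        if has_disease:
            ill[group] += 1
            if not predicted_disease:
                missed[group] += 1
    return {group: missed[group] / ill[group] for group in ill}

# Hypothetical toy data, not real patient records.
records = [
    ("insured", True, True), ("insured", True, True),
    ("insured", True, False), ("insured", False, False),
    ("uninsured", True, False), ("uninsured", True, False),
    ("uninsured", True, True), ("uninsured", False, False),
]

rates = underdiagnosis_rates(records)
gap = max(rates.values()) - min(rates.values())
```

In this toy data the model misses one of three ill patients in the first group and two of three in the second. A gap of that size between groups is the kind of signal that would prompt a closer look before and after deployment.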

But with Vector’s toolkit, users can ensure they are checking all the boxes when it comes to addressing:

- Data security – by implementing robust security measures to safeguard patient information and comply with privacy regulations such as the Personal Health Information Protection Act (PHIPA).

- Model performance – by continuously monitoring and evaluating models to ensure their performance holds up over time.

- Bias in AI – by regularly auditing and evaluating AI systems for fairness and equity using tools like Vector’s CyclOps, a set of evaluation and monitoring tools that users can apply to develop and evaluate sophisticated machine learning models in clinical settings.

By incorporating best practices for data privacy, bias mitigation, and ongoing model maintenance as outlined in the toolkit, users can carefully implement AI in ways that prioritize patient safety and contribute to the advancement of equitable and effective health care outcomes.
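As one illustration of ongoing model maintenance, the sketch below flags monitoring periods in which a model’s accuracy has dropped noticeably below the level measured at deployment. It does not use the CyclOps API or reproduce the toolkit’s checklists; the function name, the 0.05 tolerance, and the monthly figures are assumptions made for the example.

```python
def flag_degraded_windows(window_accuracies, baseline, tolerance=0.05):
    """Return the indices of monitoring windows that fell too far below baseline.

    A window is flagged when its accuracy is more than `tolerance` below
    `baseline`. The tolerance here is an arbitrary illustrative value; a real
    deployment would set it with clinical input.
    """
    return [
        index
        for index, accuracy in enumerate(window_accuracies)
        if baseline - accuracy > tolerance
    ]

# Hypothetical monthly accuracy values after deployment.
monthly_accuracy = [0.91, 0.90, 0.88, 0.83, 0.84]
flagged = flag_degraded_windows(monthly_accuracy, baseline=0.90)
```

Here the fourth and fifth months are flagged. In practice a flag like this would trigger a review of the model and its input data, not an automatic change.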

Empowering health care practitioners and improving patient outcomes

I believe we are moving toward a time where we will see change in our health system for the better. We have a unique opportunity to apply AI research and solutions to modernize health care and address the challenges we are facing in our health system. Given the potential of AI to transform patient care, it is essential that we embrace this technology rather than shy away because of the risks. We have been dealing with an overburdened health system for so long now; if we have responsible AI that can be deployed sustainably at scale, we’ll be able to make health care decisions that serve Canadians better.

Already we’ve seen that AI implemented in health can:

- Assess risks in patients by determining which inpatients are most at risk of escalating to the ICU or dying.

- Improve care for people living with congestive heart failure by collecting data from wearable devices, cutting hospitalizations in half and enabling nurse coordinators to support six times as many patients as before.

- Improve cancer care through machine learning by enhancing the accuracy of breast cancer surgeries and expediting the detection and diagnosis of prostate cancer.

We have an immense opportunity to enable sustainable change and positively impact patient care. In order to do this, we must mitigate the risks with a steadfast commitment to safe AI practices. We can embark on this journey confidently, adhering to standards and guidance that ensure the safe integration of AI in health care. This would allow health professionals to bridge the gap between cutting-edge technologies and the delivery of high-quality health services.